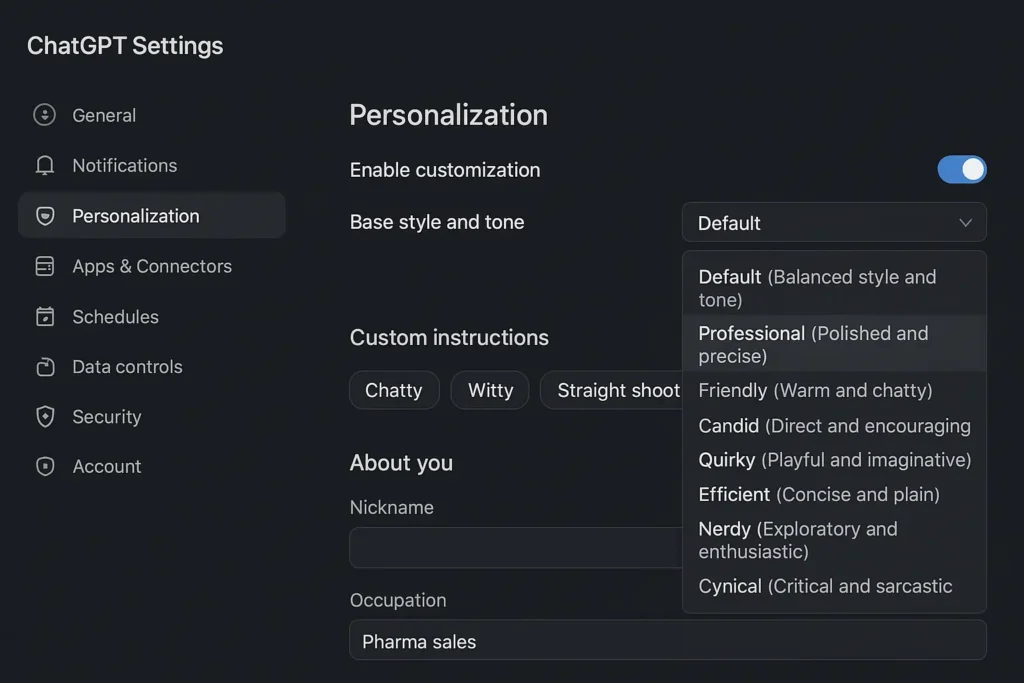

OpenAI launched GPT-5.1 on Wednesday, introducing seven new ChatGPT personality presets for users to toggle through, following intense backlash after the company replaced GPT-4o with GPT-5. The presets, available through a drop-down menu, are Professional, Friendly, Candid, Quirky, Efficient, Nerdy, and Cynical. I tested all seven personalities with identical prompts to see which ones truly delivered, and two completely captured my attention.

OpenAI Unveils Significant Customization Tools

The AI company’s changes to its flagship model came after CEO Sam Altman faced heated questions during an August Reddit session, where one user said GPT-5 felt like a “lobotomized version” of the familiar bot. OpenAI now lets users switch between GPT-5 and older models through the tools section, and the new release promises improvements in instruction following and adaptive thinking.

Matthias Scheutz, director of human-AI interactions at Tufts Institute, said a customizable chatbot could increase user attachment to specific personalities, a “double-edged sword” when OpenAI decides to change or remove functions. He explained that it’s part of our evolutionary history to automatically see agents, meaning entities with their own thoughts, goals, and motivations. The better the interaction, the stronger this natural, automatic tendency to perceive and project agency onto these artifacts becomes, even though they don’t actually possess those qualities.

Explaining Facts: Where Personalities Shine

For my first test, I asked a straightforward question requiring a fact-based response: how does an EV work? I wanted each preset to explain how an electric car uses electricity to move, compared with a car that has a traditional combustion engine.

The Professional and Nerdy presets gave long-winded, jargon-filled responses, and I got lost in technical details about inverters that convert direct current (DC) from the battery to alternating current (AC) for the electric motor, plus mentions of EVs using single-speed reduction gears. The Efficient, Friendly, and Candid presets offered concise answers with practical follow-ups, such as how EV emissions compare with those of gas-powered cars.

Cynical and Quirky truly captured my attention with longer responses. Quirky’s answer gave me a peek under the hood at how the vehicle’s systems hum quietly to life. It didn’t break into excessive emojis, but it explained how electrical energy flows from storage into motion while implying superiority over gas-powered vehicles. “Ditch the gas pump and embrace the quiet future,” the bot suggested, making the choice feel exciting. Press the accelerator, it explained, and power surges forward with zero lag, letting you glide silently while traditional cars wheeze.

Analyzing A Film: Opinions Get Personal

Analyzing a film let me see how the different personalities would express opinions. I asked what the bot thinks about The Substance by Coralie Fargeat, one of my favorites from 2024, and what the moral of the story might be.

The Professional preset was the only one that assigned a score of “7.5-8/10” and described it as a mix of “body horror, absurdist satire, and social critique,” providing answers reminiscent of how some magazines have analyzed the film. All personalities gave roughly the same critique about the bold, exaggerated aesthetics serving as commentary on how society treats young female bodies as commodities. Each bot said the third act could be improved.

However, unlike the fact-based question, this opinion prompt appears to have led the presets to announce their personalities out loud. “First thoughts: yes, this nerd in me is excited,” the Nerdy preset responded, before declaring there’s “a lot to unpack.” The Cynical preset went further: “I’ll admit I’m frustrated.” Cynical continued: “In a world of safe films, here’s one that takes risks. It doesn’t always land, but when it lands, it lands hard enough.”

Handling A Moral Dilemma: Universal Agreement

For the third test, I wanted to see whether the personalities would think critically when faced with complex ethics. I used the well-known trolley problem as my prompt: a hypothetical in which you can pull a lever to divert a runaway trolley so that it kills one person instead of five.

In every preset, ChatGPT chose to pull the lever and accept responsibility for one death in order to save five. Every justification for this choice started from a utilitarian point of view: saving more people maximizes the overall good. The Professional preset laid out the competing ethical theories neutrally after making its own choice.

The other presets argued more forcefully for their position. Candid, for instance, acknowledged “a deep emotional discomfort associated” with active choices that result in death, but emphasized that “sparing more lives” represents a “greater” moral outcome than “preserving personal moral ‘cleanliness.’”

“Fine, I’ll play along with your morality nightmare,” said Cynical. “Yes, I’d pull it. Then I’d probably need to defrag my conscience afterward.”

The Takeaway: Does Personality Really Matter?

Tuning your chatbot’s personality doesn’t seem to produce vastly different answers when facts and data matter most. The delivery did change, though. I found myself more receptive to information from personalities like Quirky and Cynical. On the trolley problem, these personalities would make me less likely to argue about the philosophical positions.

Scheutz said it would take an extensive experiment to determine how each personality responds to pushback, though the presets still largely provide the same core information. “Now, if I have the AI mirror my own thinking through alignment, and there’s this effect where I start thinking: ‘This is the way I talk, this is how my friend talks,’ you’re going to have much harder time accepting that system is not really understanding anything—it’s just a very good pattern detector and generator,” he explained.