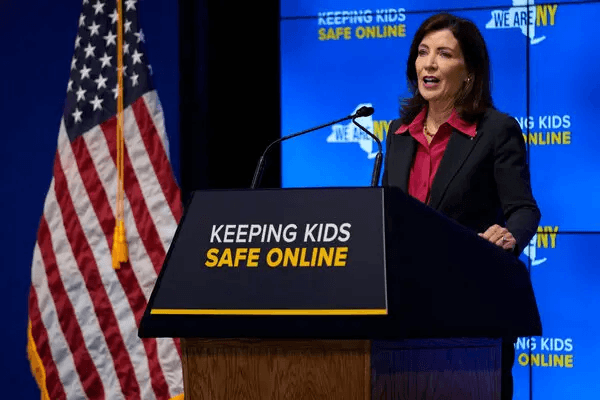

New York State Attorney General Letitia James has proposed groundbreaking social media regulations that would require TikTok, Instagram, and other platforms to verify users’ ages in order to identify those under 18. These guidelines, introduced in September 2025, aim to protect kids from addictive digital experiences that damage mental health.

The Stop Addictive Feeds Exploitation (SAFE) for Kids Act represents a critical step toward addressing the youth mental health crisis plaguing America’s teenagers. As someone who grew up completely immersed in the internet, I understand both the allure and dangers of unlimited screen time. My generation served as unwitting test subjects for social media companies experimenting with algorithms designed to maximize engagement at any cost.

The Personal Cost of Growing Up Digital

I was 11 years old when I first created a Facebook account after transferring to a new school. What started as innocent curiosity quickly became an addiction that would shape my teenage years in ways I’m still understanding today. The platform felt like a secret place where all the grownups were, and being there made me feel sophisticated beyond my actual age.

Those early days on Facebook were deceptively simple – I became a fan of dozens of pointless pages, tended virtual crops on FarmVille, and fretted about which overedited photo to use as my profile picture. But even as a preteen, I instinctively knew that having the perfect online presence was part of the social hierarchy of middle and high school. Every like, comment, and tag became a source of gratification, especially when the “right people” were the ones interacting with my content.

The Evolution of Digital Dependency

As I got older, my preferred platforms changed. Soon I was posting selfies on Instagram and divulging secrets on Tumblr, spending even more time online as I chased internet fame. I would scroll for hours into the early morning, messaging boys at my school with hopes that one would develop a crush on me, and trying to talk up the popular girls so they would let me into their circle.

The sophisticated algorithms of today’s digital platforms make my experience look primitive. Artificial intelligence has become commonplace across multiple platforms, creating a Pandora’s box of ethical dilemmas. Content that was once difficult to find is now easily accessible, and personalized feeds are tailored precisely to each user’s interests and habits. The video of Charlie Kirk’s assassination that recently went viral on X demonstrates just how easily accessible disturbing content has become.

Why New York’s Approach Matters

New York’s proposed regulations aren’t perfect, but they represent a badly needed first step. The SAFE for Kids Act, which passed in 2024 and has yet to take effect, will bar platforms from showing children personalized feeds, and from sending them notifications between midnight and 6 a.m., without parental consent. Implementation will be difficult – verifying young people’s ages via government-issued identification only works if they have a form of ID, which most people don’t have until they’re at least 15.

There’s also the possibility that social media companies will confirm a person’s age by having them submit a selfie or video for analysis, which could be easily manipulated or flat-out incorrect. Despite these concerns, I’m glad New York is trying to do something about children’s social media consumption. The screening and compliance measures, while imperfect, signal a new direction in how we tackle the digital challenges facing today’s youth.

The Mental Health Connection

By age 17, I was diagnosed with depression. While my mental illness wasn’t directly caused by my social media use, it certainly didn’t help things. I knew even then that the huge feelings swirling in my brain were exacerbated by my screen time, but I couldn’t stop scrolling. My baseline mood had shifted to persistent sadness, and the constant comparison and validation-seeking behaviors that digital platforms encourage had become deeply ingrained habits.

Today’s teenagers have even more to worry about than my generation did. The algorithms have only grown more sophisticated, to the point that every popular platform is designed to keep users hooked through carefully engineered psychological manipulation. The notifications, trending topics, and viral content create an environment where teenagers feel constantly compelled to check, post, and interact with their digital presence.

What Other States Should Learn

As a child of the internet, I know how much I would have hated guidelines like New York’s when I was younger. I also know how badly they’re needed. Other states should follow New York’s example and implement similar regulations that prioritize children’s mental health over corporate profits. The verification process may be imperfect, but it’s a start toward protecting kids from the same digital pitfalls that shaped my teenage years.

The social hierarchy that once existed primarily in school hallways has expanded into a 24/7 digital environment where young people can never truly escape the pressure to perform, compete, and seek validation. Parents need tools to protect their children, and social media companies need accountability for the addictive products they create. New York’s bold step toward age verification and feed restrictions provides a blueprint other states can follow to address this crisis.